In the last few months, I have been reading quite a bit about machine translation. I also took the opportunity to attend sessions on MT at the recent LocWorld in Seattle and the ATA conference in San Diego.

In Seattle, TAUS presented several real-world examples of what can be done today with the Moses engine. It was refreshing to hear from experts on statistical MT that terminology matters, since that camp, at least at MS, had largely been ignorant of terminology management in the past. Here are a number of worthwhile tutorials on the TAUS site for those who’d like to stay abreast of developments.

At the ATA, the usual suspects, Laurie Gerber, Rubén de la Fuente, and Mike Dillinger, outdid each other once again in debunking the myths around MT. When fears did come up in the audience about MT and its effects, I had to think of a little story:

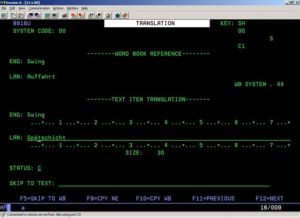

In the mid-90s, five of us German translators at J.D. Edwards were huddled in a conference room for some training. Something or someone was terribly delayed, and while chatting we all started catching up on the translation quota due that day. You know what that involved? It involved finding a string that came up 500 to 800 times. After translating it once, you could continue your chat and hit enter 500 to 800 times. See the screen print of the translation software to the right and you will realize that the software didn’t allow a human to translate like a human; we translated like machines, only worse because…oops, the 800 strings are through and you are on to the next source string. Some would call this negligent behavior, and I am glad that today we have better translation software and that machines assist with or do the jobs that we are not good at.